I'm interested in high-dimensional probability and statistics, and in particular in their relation to modern machine learning. Part of this interest stems from my curiosity about why certain learning algorithms and models behave the way they do, and why they are as successful as they are. Another part stems from the fascinating phenomena that emerge in high-dimensional random models and their landscapes. I'm currently a final-year PhD candidate at Stanford, advised by Professor Andrea Montanari. Previously, I was a Master's student at MIT, where I worked in the MIT Institute for Data, Systems, and Society (IDSS) with Professor Caroline Uhler on causal inference and graphical models. I graduated from MIT with a B.S. in Computer Science and Electrical Engineering and a minor in Mathematics. Earlier, I worked in the MIT Computational Cognitive Science Lab with Professors Josh Tenenbaum and Ilker Yildirim, and in the Laboratory for Information and Decision Systems (LIDS) with Professor Bob Berwick. I was fortunate to be supported by the NSF Graduate Research Fellowship Program during my PhD.

Research

High-dimensional sharp asymptotics for multi-index models

with Kiana Asgari and Andrea Montanari (under review)

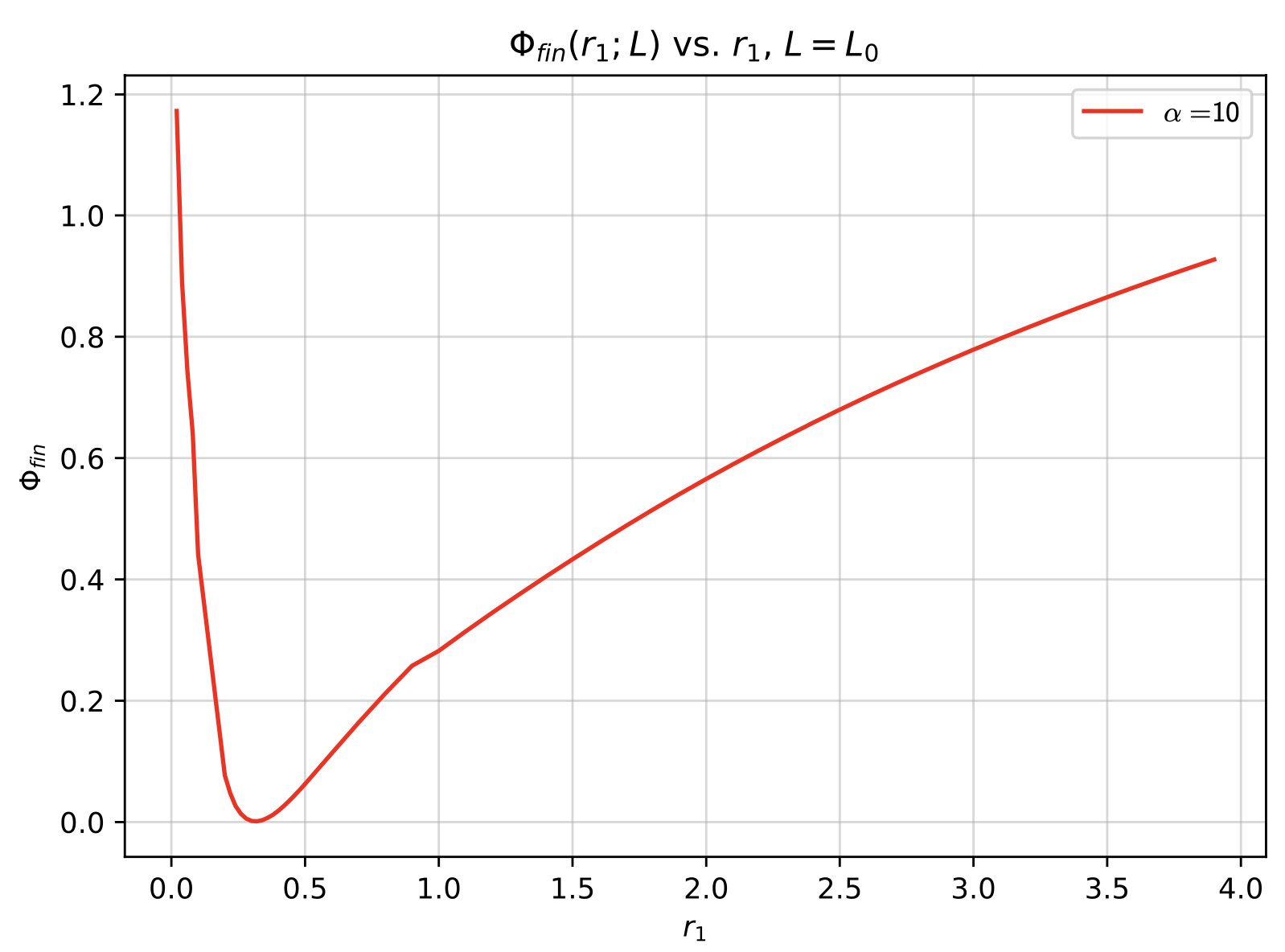

We develop an approach to studying the local minima of the empirical risk in high dimensions that is versatile enough to capture non-convex, multi-index settings, overcoming challenges that other approaches to this problem typically face.

High-dimensional kernels and random matrix theory

with Theodor Misiakiewicz (under review)

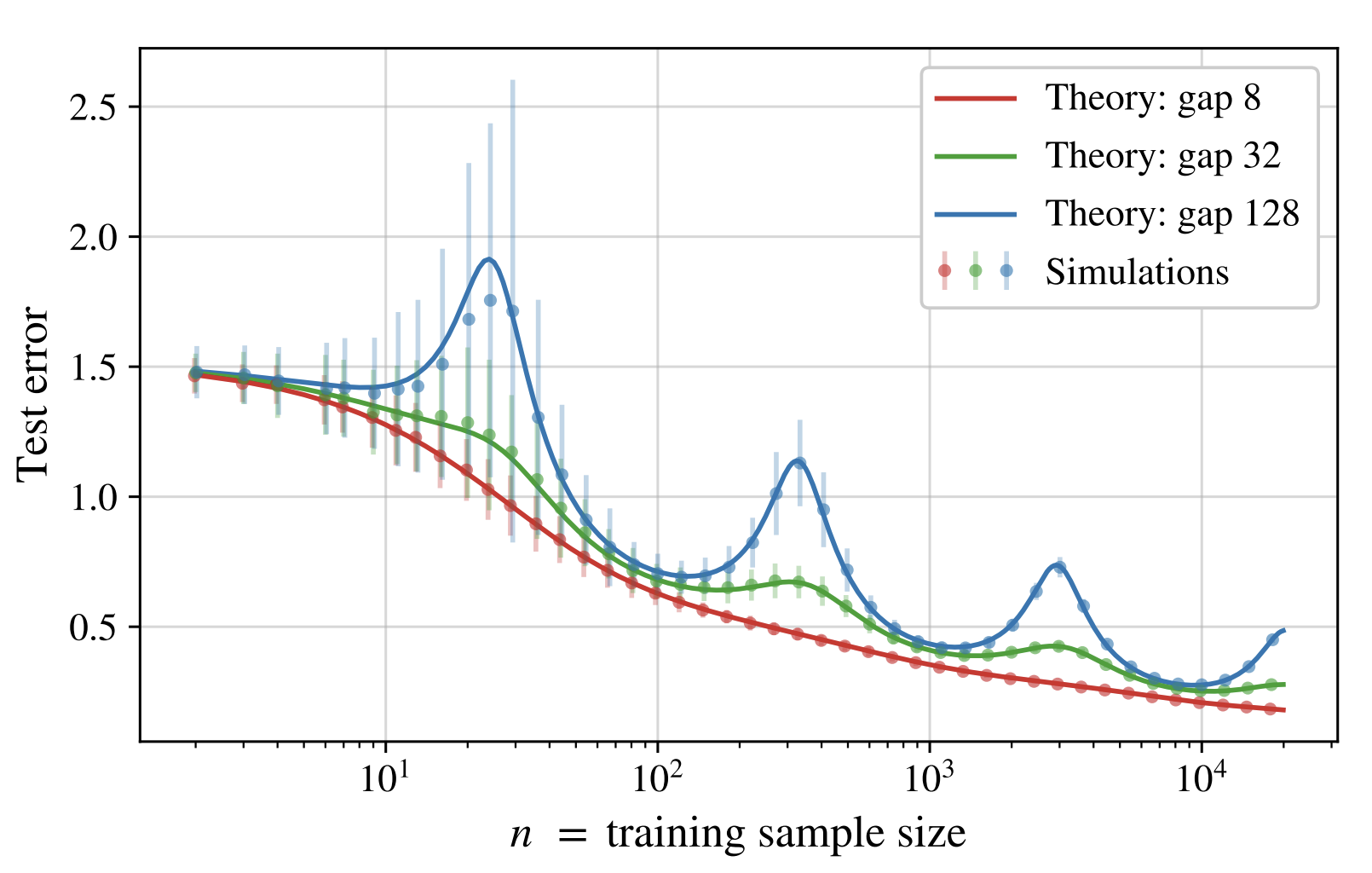

We study kernel ridge regression in the high-dimensional polynomial regime and derive a non-asymptotic characterization of the test error and of the GCV estimator. We do this using the theory of deterministic equivalents from "infinite-dimensional" random matrix theory.

Universality and invariance principles in high dimensions

with Andrea Montanari (under review)

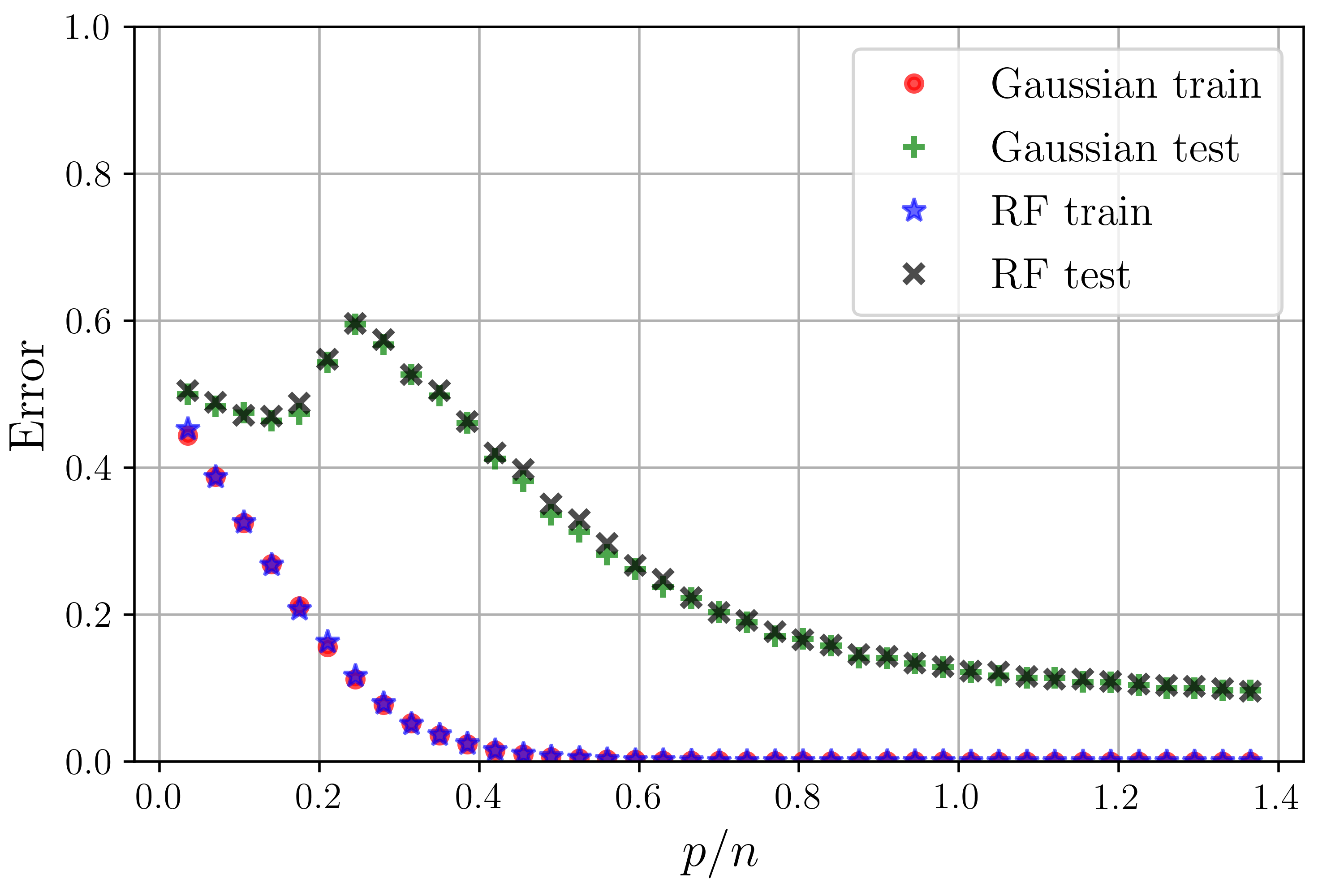

We give fairly general conditions on an empirical risk minimization problem under which the training and test errors of the resulting estimator asymptotically depend only on the first and second moments of the data distribution. This makes it possible to analyze a "Gaussian equivalent" model in which the data are replaced by Gaussian data with matching first and second moments.

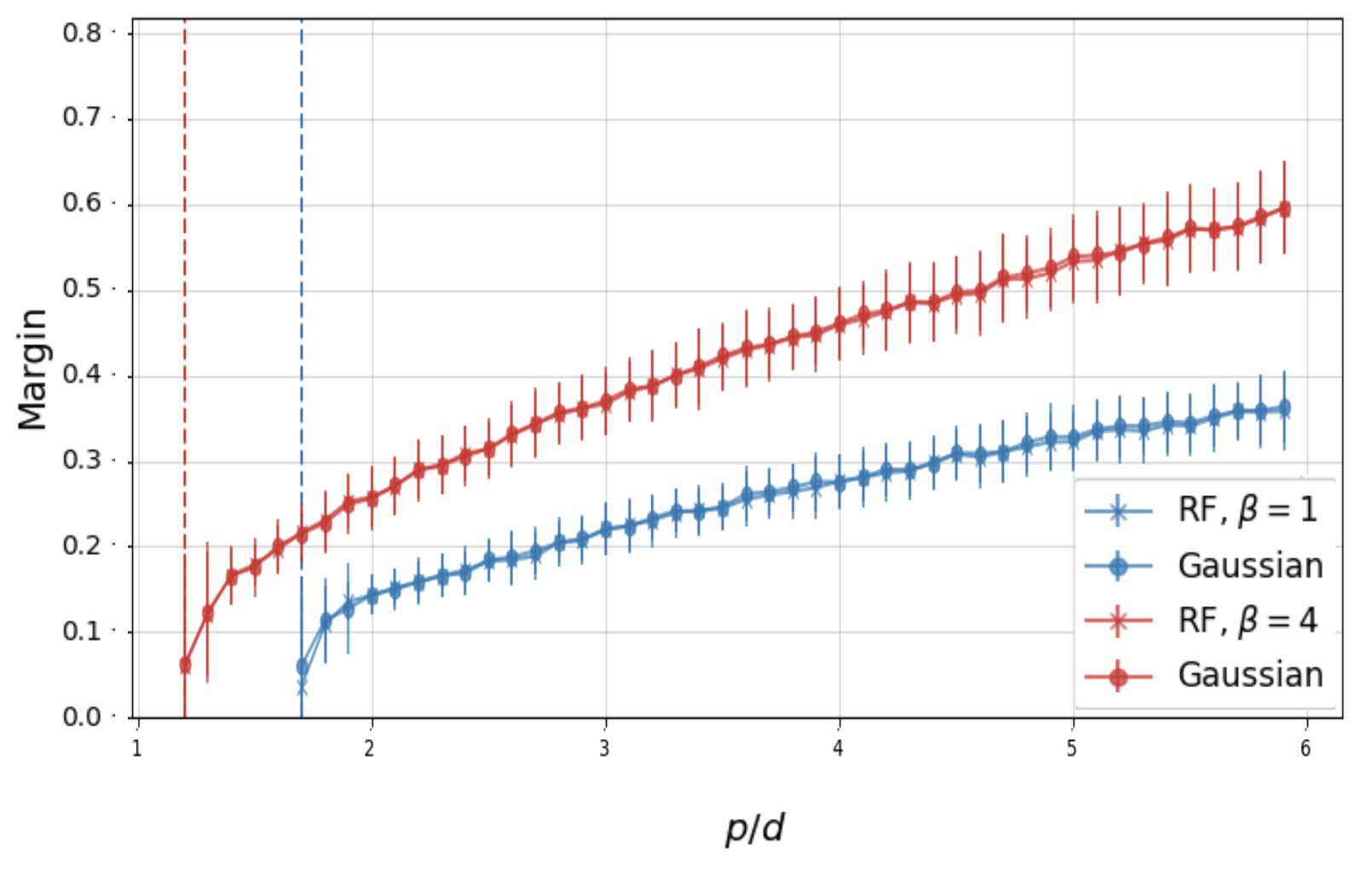

with Andrea Montanari, Feng Ruan and Youngtak Sohn (under review)

We extend universality to the min-max extremal problem of max-margin classification; namely, we show that the margin and the classification error asymptotically depend on the data only through the first and second moments of the distribution. This phenomenon arises from a hidden averaging effect: in the high-dimensional regime, the max-margin problem can be written as the maximization of an ERM-like objective whose average runs over a number of support vectors on the order of the sample size.

Graphical Models and Causal Discovery

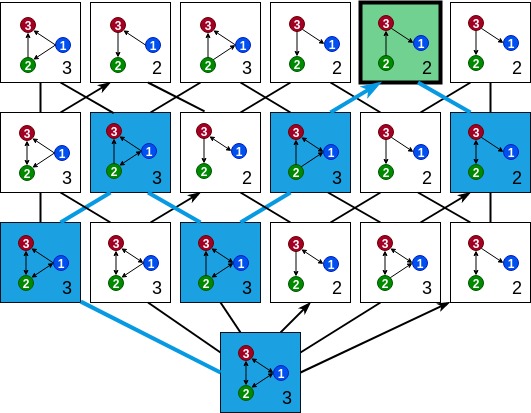

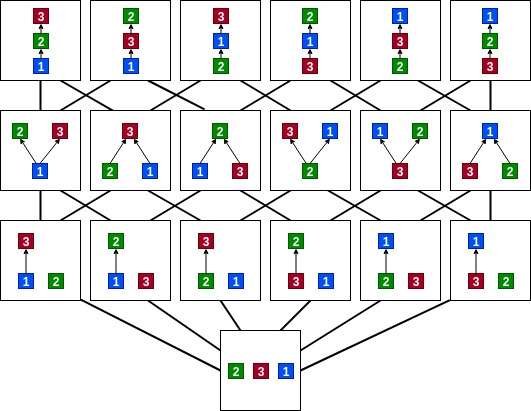

Master's Thesis

Develops a provably consistent score-based algorithm for causal discovery in the presence of latent confounders. Given observed data from a mixed graph (representing a causal graph with latent confounders), the algorithm maps each poset to the mixed graph that best represents the data among those compatible with that poset, and then greedily searches over the more constrained space of posets to find a graph that is Markov equivalent to the generating graph.

with Snigdha Panigrahi and Caroline Uhler (AISTATS 2020)

We provide theoretical guarantees on what can be learned from data generated by a mixture of DAGs when there is limited knowledge about the mixture components and the mixture proportions. In particular, we investigate what can be inferred about the components of the mixture and about the membership of the data points.

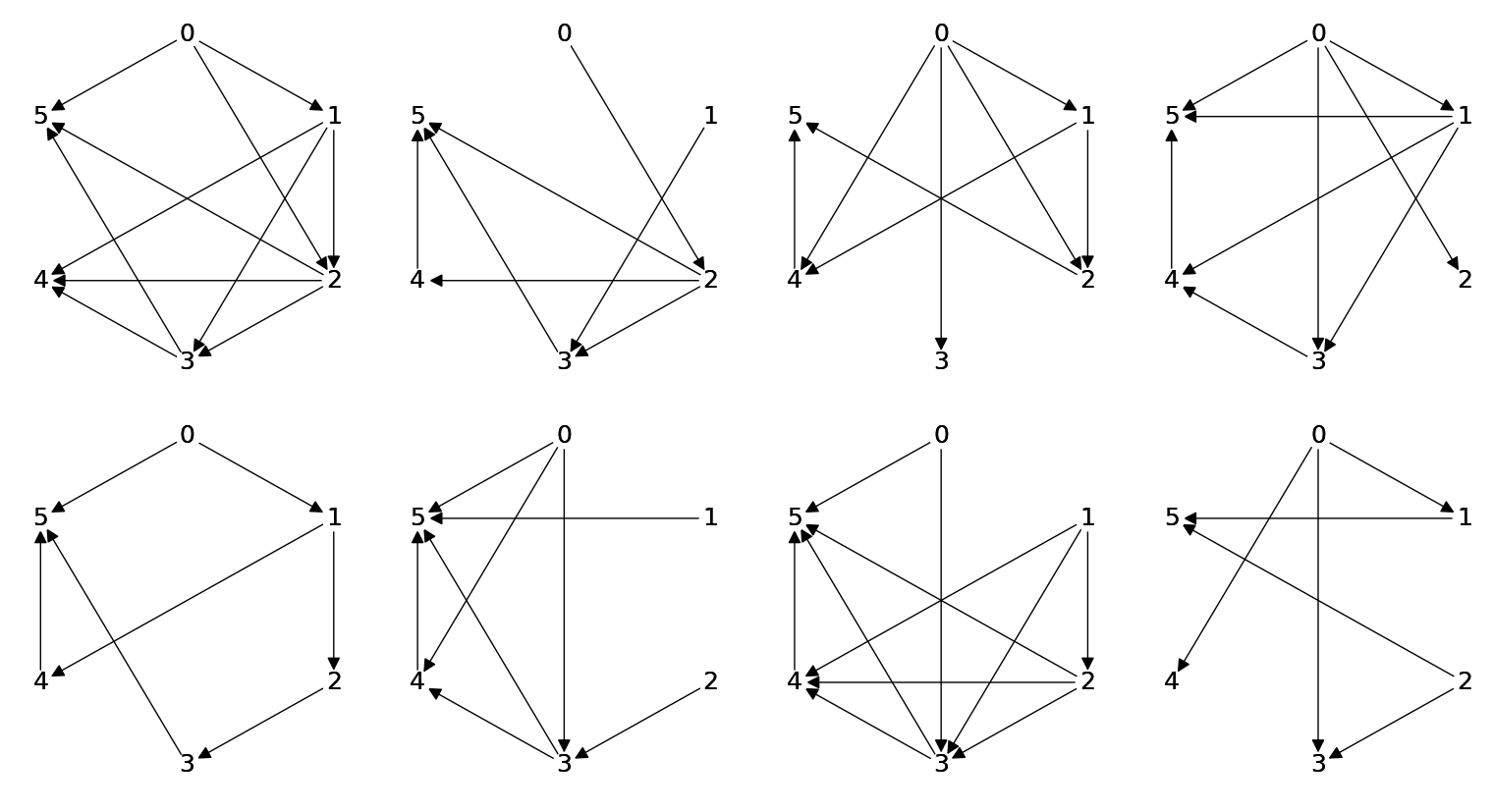

with Daniel Bernstein, Chandler Squires*, and Caroline Uhler (ICML 2020)

We show that learning a causal graph in the presence of latent variables (represented by mixed graphs) can be cast as an optimization problem over the space of partial orderings of the set of observed variables. We prove, under assumptions weaker than faithfulness of the distribution to a mixed graph, that any sparsest independence map (IMAP) of the distribution belongs to the Markov equivalence class of the true model. This motivates the Sparsest Poset formulation: posets can be mapped to minimal IMAPs of the true model such that the sparsest of these IMAPs is Markov equivalent to the true model.

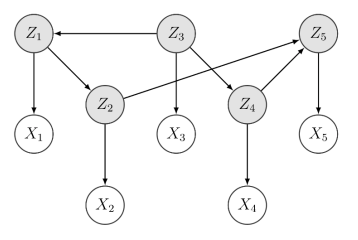

with Anastasiya Belyaeva, Yuhao Wang and Caroline Uhler (UAI 2020)

We develop a provably consistent procedure for learning a causal graph in the presence of measurement error, for a wide class of measurement noise models, when the noiseless variables are Gaussian. We prove asymptotic consistency, discuss finite-sample considerations, and demonstrate our method's performance on simulated data and on real data, where we recover the underlying gene regulatory network from zero-inflated single-cell RNA-seq data.

Task and motion planning in physical problem solving, and its relation to the human concept of "effort"

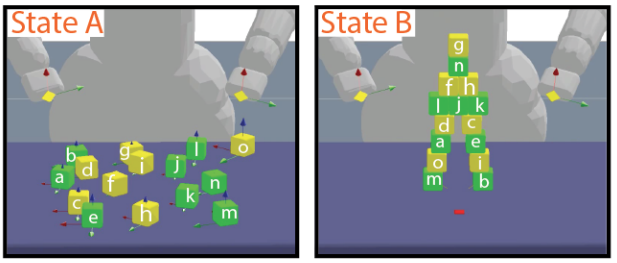

with Ilker Yildirim, Grace Bennett-Pierre, Tobias Gerstenberg, Joshua Tenenbaum and Hyowon Gweon (CogSci 2019)

We give a computational account of how humans judge the difficulty of a range of physical construction tasks (e.g., moving 10 loose blocks from their initial configuration to a target configuration, such as a vertical tower) by quantifying two key factors that influence construction difficulty: physical effort and physical risk.

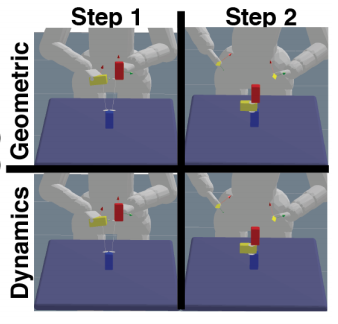

with Ilker Yildirim, Tobias Gerstenberg, Marc Toussaint and Joshua Tenenbaum (CogSci 2017)

We develop a model that plans over a symbolic representation of an object manipulation task, executes the plan using a geometric solver, and checks the plan's feasibility against the physical constraints of the scene, with the aim of explaining participants' actions and mental simulations when they encounter such a task.

Teaching

Presentations
